Ingestion & Connectivity

By a former Google recruiter

Data engineering is unusual: the role spans junior pipeline engineers shipping their first dbt model all the way up to Principal Data Platform architects designing multi-petabyte lakehouses. Generic resume services treat it like backend-with-some-SQL, and that's exactly why recruiters drop data engineering resumes at the summary line.

The TechieCV tech resume writing process is built to speak the vocabulary: pipeline orchestration, dimensional modeling, dbt and warehouse design, streaming ingestion, data quality contracts, and warehouse cost engineering. That's what I write. That's the only thing I write. Pricing and packages are listed on a separate page; this one is about the work itself.

2026 market note: Data engineering hiring has rotated hard since the AI buildout. Recruiters now expect dbt fluency on every senior+ resume, real lakehouse depth (Iceberg, Delta, Hudi), and a coherent stance on streaming versus batch tradeoffs. Generic “wrote ETL pipelines” bullets no longer clear the Phase-1 recruiter screen.

From hands-on Analytics Engineers to Principal Data Platform architects, this service writes resumes across the full data engineering spectrum. If you land anywhere in the buckets below, you're in the right place.

Every Data Engineer resume that lands in a recruiter's queue gets a similar scan. Below is the type of checklist I used at Google and still use on every resume I write. Miss a few and your resume gets rejected.

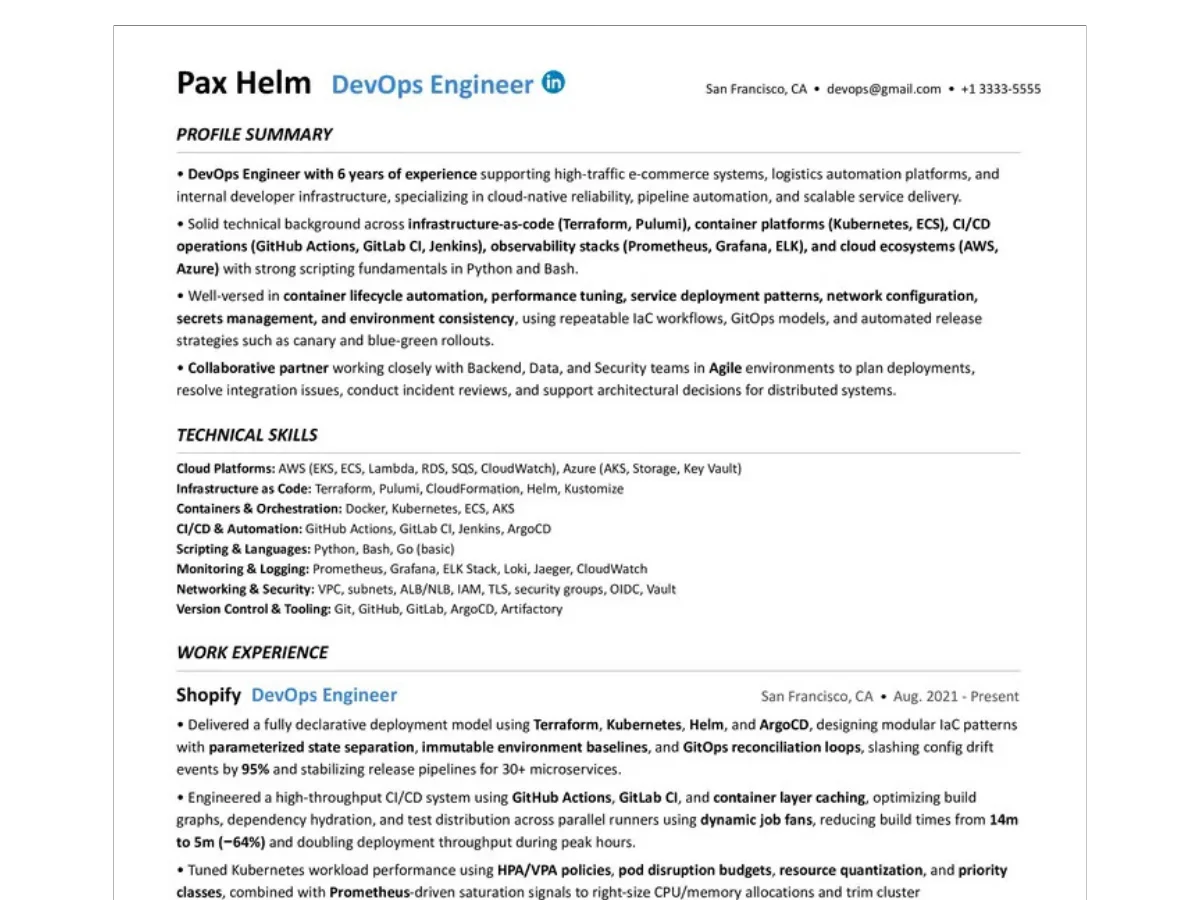

The top 3 to 4 lines. The recruiter checks in a glance that the target role, seniority, and warehouse / orchestration stack are all legible before scrolling.

Does the work experience cover the Data Engineer Role Profile? Ingestion, modeling, transformation, orchestration, quality, serving: ticked off or flagged as gaps.

Clustered, labelled, and calibrated to the target warehouse stack. Raw lists of 50 tools get ignored. Curated sub-sections (warehouse, orchestration, streaming, quality) get read.

Pipeline reliability %, freshness SLAs hit, warehouse cost cuts, model build time, downstream consumer count. If there are no numbers, the recruiter can't tell a senior Data Engineer from a SQL operator.

Scope of influence, data contract decisions, cross-team partnership with analytics and ML, on-call ownership of pipelines. Visible without the recruiter having to hunt for it.

Is the weighting Data Engineer, Analytics Engineer, or Data Platform Engineer, or a carpet-bombing of every buzzword? Recruiters read for intentional application.

Comparable data domains (fintech regulatory reporting, ad-tech event scale, marketplace economics, healthcare PHI). Domain-fluent Data Engineers get read first; generalists get benchmarked against them.

Named, defensible work: the lakehouse migration, the warehouse cost rewrite, the streaming cutover that became a case study. Vague “helped build the data pipeline” bullets get filtered. Specific projects with scope and outcome get remembered.

Twelve competencies a Data Engineering hiring manager scans for, mapped to the eight pipeline stages and the four cross-cutting foundations. Built from screening hundreds of Data and Analytics Engineers at Google.

Hiring managers want resumes that prove you can move data without losing it. Fivetran, Airbyte, Kafka Connect, custom CDC, and REST/GraphQL extractors built with retry, idempotency, and schema-drift handling.

Companies care about which side of the warehouse vs lakehouse line you sit on. Iceberg, Delta, Hudi, Parquet, partitioning strategy, table layout choices that survived under real query load.

You need to demonstrate dimensional modeling judgment, not just SQL fluency. Star, snowflake, Data Vault, slowly changing dimensions, and the tradeoffs you actually made under stakeholder pressure.

To convince recruiters, prove you can ship trustworthy SQL at scale. dbt models, incremental materializations, macros, exposures, and performance-tuned warehouse SQL that downstream teams actually trust.

Hiring managers want DAG ownership, not just task authoring. Airflow, Dagster, Prefect, dbt Cloud. Retry semantics, backfill strategy, dependency graph design, and on-call discipline when pipelines page at 3am.

You need to prove downstream teams can trust your data. dbt tests, Great Expectations, contract tests, freshness SLAs, and observability tooling (Monte Carlo, Soda) wired into the pipeline, not bolted on.

Companies promote engineers who make the warehouse fast for consumers. Semantic layers (LookML, Cube, dbt Semantic Layer), materialized views, clustering, partition pruning, and query performance tuning that finance noticed.

FinOps and governance signal is increasingly the differentiator. Data contracts, PII handling, lineage (OpenLineage, DataHub), and warehouse cost cuts on Snowflake, BigQuery, or Databricks that you can actually defend.

Eight stages, two lanes. Lane 1 covers the source-to-warehouse path; Lane 2 covers serving the warehouse to the rest of the company. Calibrated from 2026 hiring data.

Production warehouse experience, not sandbox accounts: Snowflake, BigQuery, Databricks, or Redshift at organizational scale. Multi-warehouse strategy, RBAC design, and managed-service tradeoffs are what separate seniors from operators.

Spark, Flink, Trino, and Presto at production scale: shuffle tuning, broadcast joins, partition pruning, and Iceberg / Delta table maintenance. Toy notebooks do not count. Cluster cost discipline does.

Kafka, Kinesis, Flink, and CDC pipelines that run in prod: exactly-once semantics, schema registry discipline, watermark handling, and the specific moment you chose (or didn't choose) streaming over batch, plus the reason it paid off.

Python and SQL fluency that goes beyond literacy: performant SQL, Python or Scala for pipeline code, dbt internals, Airflow plugin authoring, and the tooling judgment to know when to script versus when to reach for a managed service.

Each bullet on your resume is rebuilt using my 5-Level System: from a basic task description (Level 1) to a hiring-manager-grade signal combining engineering techniques, tech stack, methodology, and quantified impact (Level 5).

Level 1 Task only

Built data pipelines and improved query performance for the analytics team.

Level 5 Techniques + Tools + Method + Metric

Re-architected a 280-table Snowflake warehouse onto dbt + Iceberg with incremental materializations and cluster-key partitioning, replacing handwritten ELT scripts and ad-hoc Airflow DAGs. Cut nightly build time from 4h 20m to 38m (-85%) and warehouse spend from $34K/mo to $11K/mo (-68%) across 60+ analytics consumers.
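Percentages in a bullet like this are worth recomputing before they reach a recruiter, since a figure that doesn't match its own before/after values is easy to spot. The sketch below is one way to do that check; the helper name `pct_change` is hypothetical and used only for illustration.

```python
def pct_change(before: float, after: float) -> float:
    """Return the signed percentage change from `before` to `after`."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before * 100.0


# Nightly build time: 4h 20m converted to minutes, then compared to 38m.
build_before_min = 4 * 60 + 20  # 260 minutes
build_after_min = 38

# Monthly warehouse spend in dollars.
spend_before = 34_000
spend_after = 11_000

print(f"build time: {pct_change(build_before_min, build_after_min):.1f}%")  # -85.4%
print(f"spend: {pct_change(spend_before, spend_after):.1f}%")  # -67.6%
```

Converting both durations to the same unit first avoids the most common slip, which is comparing hours against minutes.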

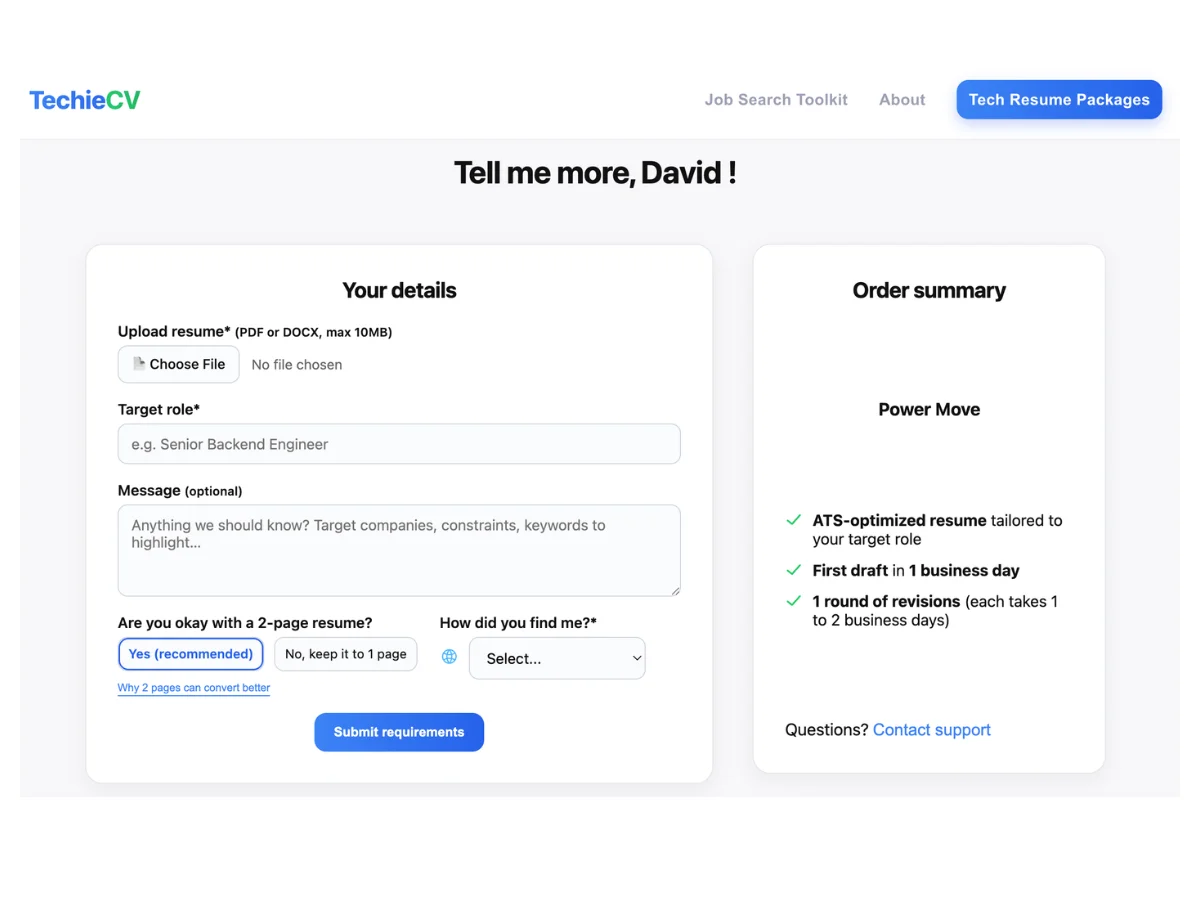

No video calls. No back-and-forth scheduling. Just a clear, structured process that happens in writing, moves at your pace, and keeps you in control at every step.

Working in writing is a deliberate choice. Google Docs lets us attach comments to specific bullets, technical terms, and individual sections. You can see every change, ask questions in context, and provide input whenever it suits you.

01

I start from your current resume and a short requirements form: your target role, seniority level, and any specific job descriptions you're targeting.

If your resume isn't up to date or there's context it doesn't include yet, you can add a brain dump document. No formatting required, just write whatever comes to mind.

You also get direct email access throughout the entire process, so no questions are left unanswered!

02

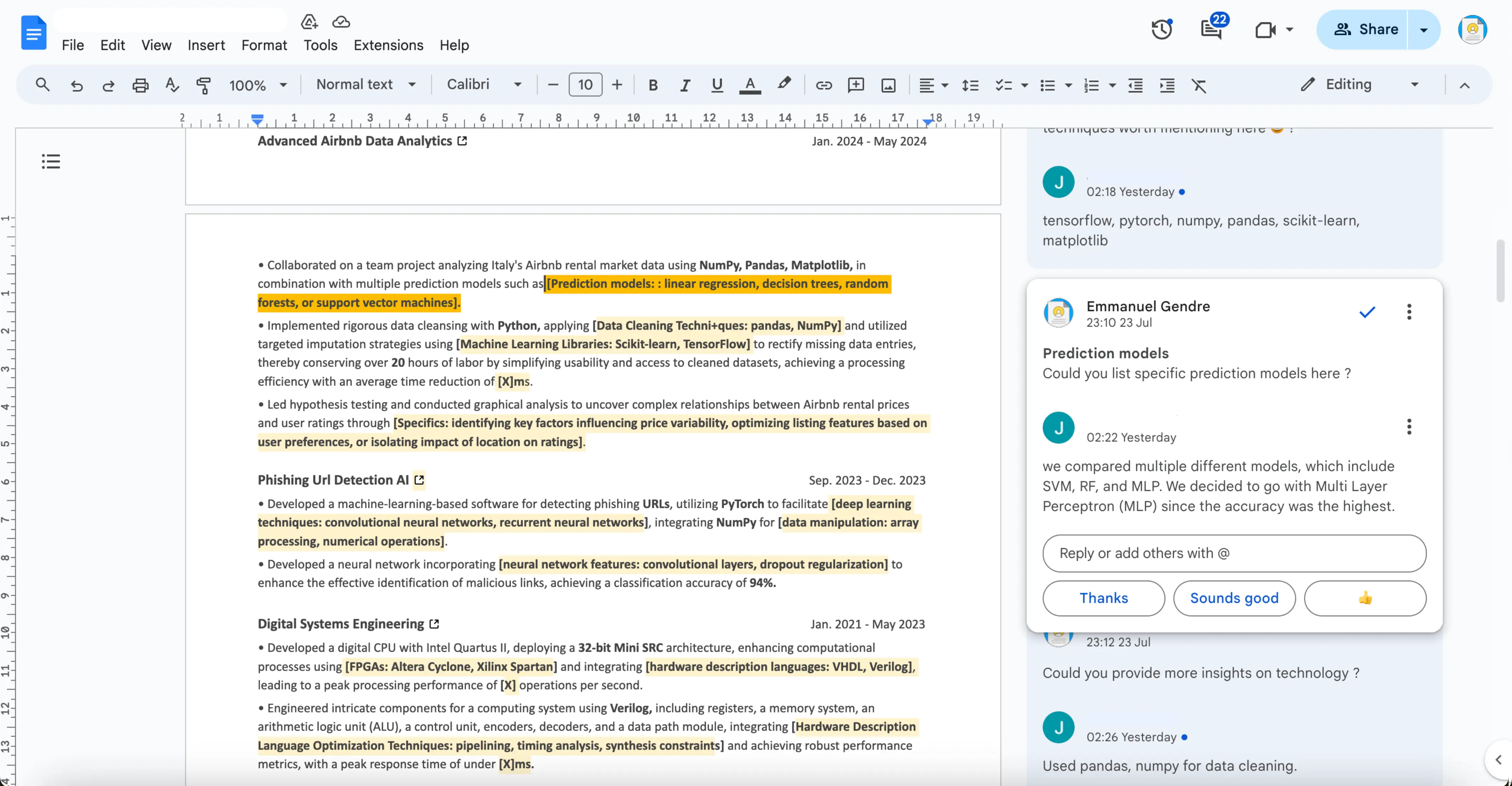

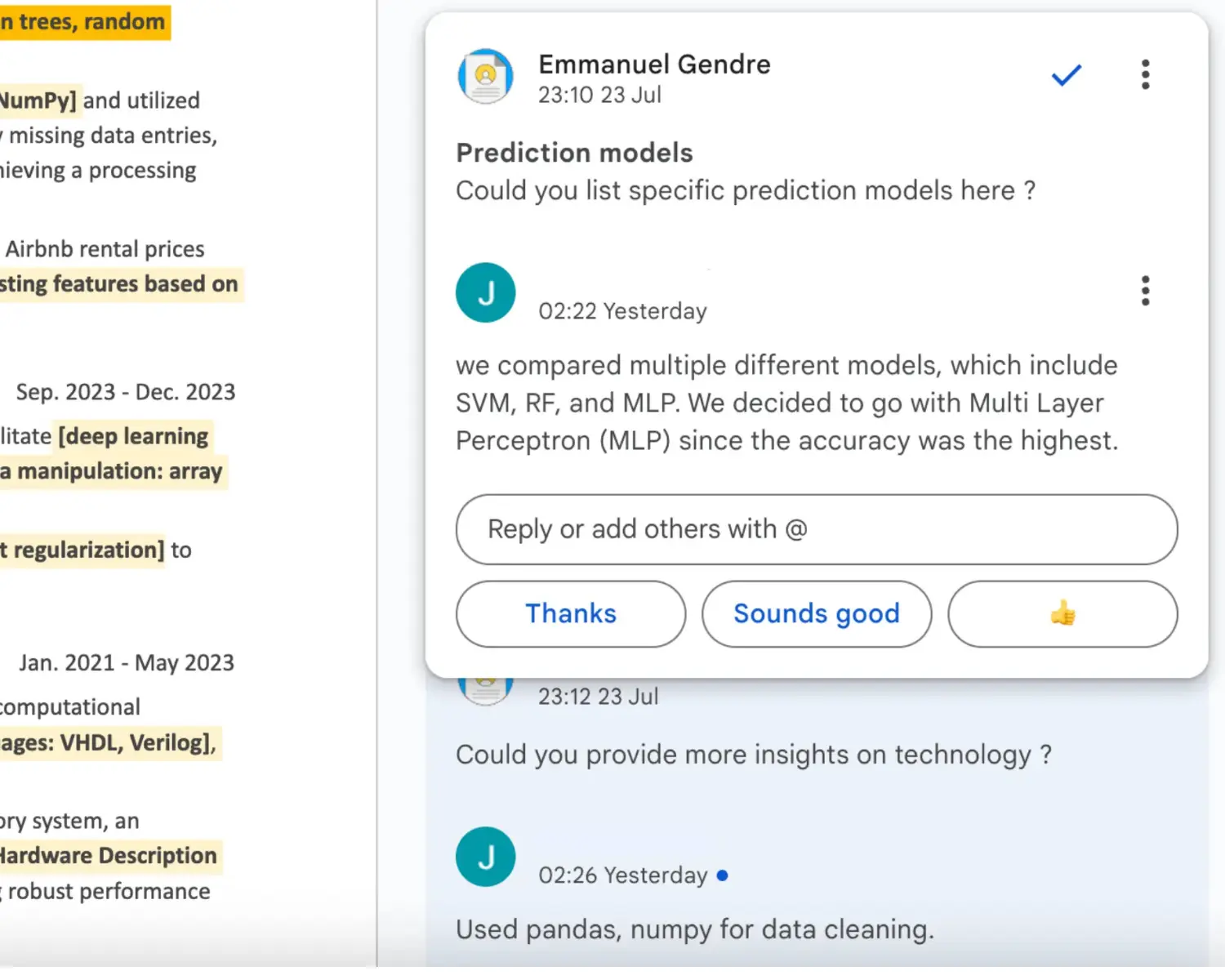

Your resume is rewritten entirely and delivered as a shared Google Doc. Not edited. Not cleaned up. Rewritten from scratch.

The draft includes comments throughout explaining specific decisions: why a bullet was restructured, why a section was added, what a recruiter is looking for in that specific area.

Placeholders flag suggestions for technical depth (specific tools, architectural patterns, engineering techniques, metrics, and so on), so you know exactly what to fill in and why it matters.

Power Move clients receive their first draft within 1 business day.

03

Take as much time as you need. The comments in the doc lay out clearly how to respond to each suggestion. You can edit directly in the document, reply via comment, ask questions, or add more context. There's no right or wrong way to engage. Some clients write paragraphs, others leave one-line notes. Both work.

This is the part most clients don't expect. Most say that answering specific technical questions about their own work is where the real material surfaces: accomplishments they'd forgotten, impact they'd undervalued, or context they never thought belonged on a resume.

04

Once you have provided your input, the final version is delivered within 1 business day. Climb The Ladder and Power Move clients get unlimited revisions for 30 days from the date of first delivery, so we can iterate as many times as needed until the resume is exactly right.

Recent Data, Analytics, and Platform offers landed by TechieCV clients.

Dates are when the offer was signed.

| Company | Position | Offer signed |
|---|---|---|
| Airbnb | Sr. Data Engineer | Mar 2026 |
| Stripe | Senior Analytics Engineer | Jan 2026 |
| Datadog | Staff Data Platform Engineer | Nov 2025 |
| Shopify | Data Engineer | Aug 2025 |
| Cloudflare | Streaming Data Engineer | May 2025 |
| Meta | Senior Data Engineer | Feb 2025 |

Placements reflect signed offers. Client identities are kept confidential.

Upload your resume. I'll send back a recruiter-grade assessment within 12 hours. No charge, no catch.

Google-level recruiter screen + clear grading & checklist, on your Data Engineer resume.

Yes, all three. They overlap in tooling but the emphasis differs. Analytics Engineer resumes lean on dbt fluency, semantic layers, and stakeholder partnership. Data Platform Engineer resumes lean on warehouse internals, lakehouse architecture, and cost engineering. Data Engineer resumes sit in between. I tune the summary, skills, and bullet weighting to the exact target.

A Backend resume proves you can ship application logic. A Data Engineer resume proves you can move and shape data so the rest of the company can trust it. Recruiters screen Data Engineer resumes for pipeline reliability, data modeling judgment, freshness SLAs, and warehouse cost discipline, none of which a pure backend resume emphasizes.

Yes, about 55% of my Data Engineer clients are senior+ (Senior, Staff, Principal, Lead Data Platform Engineer). The senior content is very different: less tool enumeration, more architectural judgment, data contract design, cross-team influence, and org-wide cost or governance decisions.

Both. FAANG Data Engineering resumes emphasize scale: petabyte-class warehouses, multi-region pipelines, and strict data contracts. Startup Data Engineer resumes emphasize breadth: you own ingestion through serving, and bullets need to show that range without sounding scattered. I tune the angle to the target.

One page if you're under ~8 years in. Two pages once you're a Staff-level engineer with multiple platform or warehouse milestones worth the space. Length-for-length's-sake hurts you. Recruiters skim in seconds, and padding dilutes your strongest signals. More on this in the resume length guide.